I journal because I have an inner monologue that needs somewhere to go. Writing it down keeps me grounded. Reading it back, occasionally, helps me notice patterns I'd miss in the moment. I've been doing it for years, on and off, in notebooks and notes apps and the occasional voice memo I forget to listen to.

The problem with journaling is that the journals just sit there. You write something down, it leaves your head, and it stays on the page. The pages stack up. The patterns hide in plain sight. The thing you wrote three weeks ago, the one that would have helped you understand what you're feeling today, is buried under thirty other entries you also haven't reread.

I've thought about therapy. Therapy is good. Therapy is also clinical in a way that didn't fit what I was looking for. I didn't need diagnostic language or coping strategies. I just wanted to hear my own thoughts, pulled together, in my own voice. I wanted somebody to read what I'd written and say back to me, gently, here's what you've been talking about this week.

So I built it.

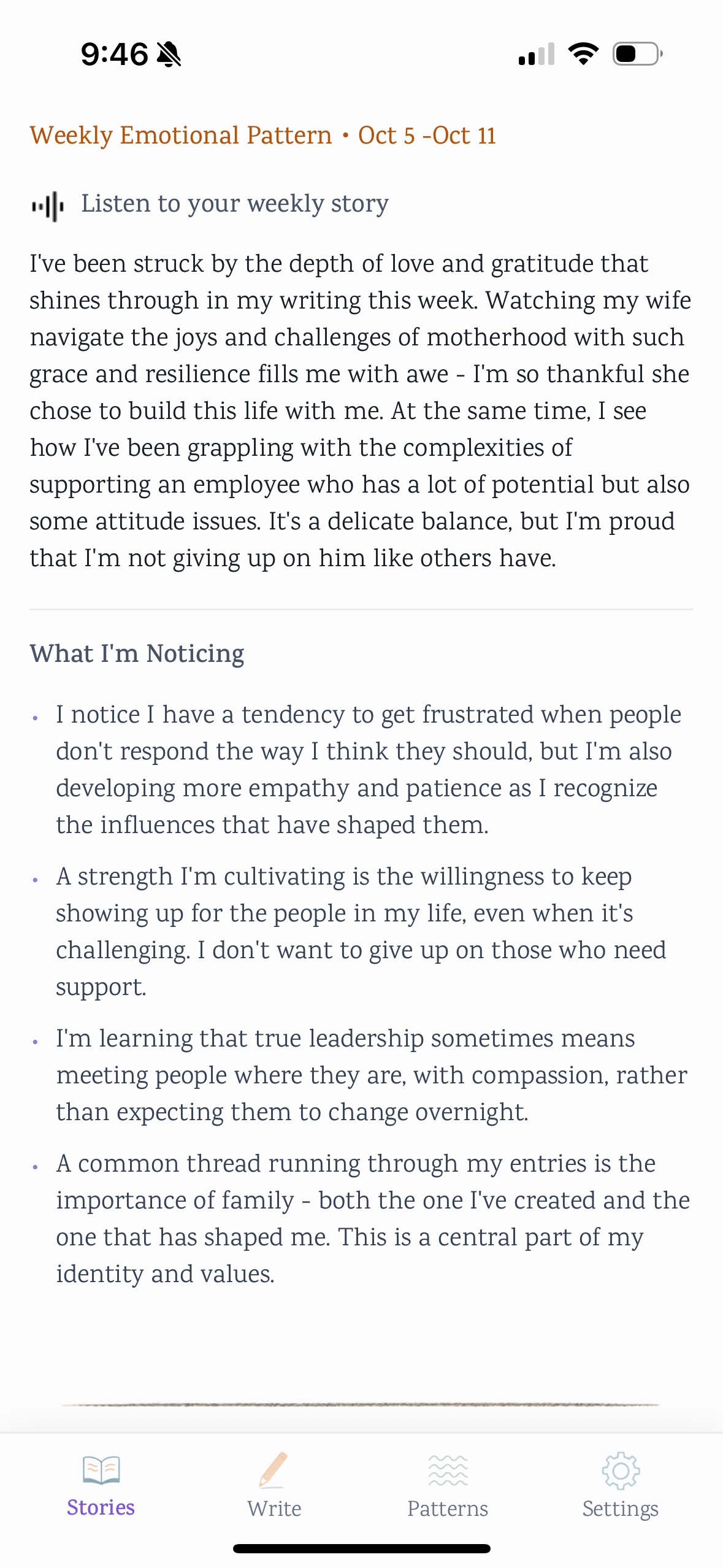

The app is called Dear Self. You journal daily, the way you would in any notebook. Once a week, Claude reads what you wrote and gives you back a short narrative recap, structured like a story, told in your voice. Not summarized. Not analyzed. Pulled together.

The choice to use Claude wasn't technical. It was about trust.

Journaling content is the most personal data a person produces. Half-formed thoughts, things you're still working through, things that would feel different the second you said them out loud. If I'm going to put that into a model, I need to know the company behind the model has thought hard about what it does with personal content, what it surfaces, what it withholds, how it handles the moments when a user might be writing something fragile. From everything I'd read, Anthropic thinks about that more seriously than anyone else. So I picked Claude.

The choice of model is a design decision. The values of the company behind it are part of the product.

The thing I didn't expect was what designing for journaling would teach me about restraint. Most AI features I've designed at work are about doing more. Generate a draft. Surface a pattern. Recommend an action. Dear Self had to do less. The temptation was to add sentiment analysis, mood tracking, suggested prompts, gentle nudges to write more often. I cut all of it. The user's voice was the only voice that mattered. The AI's job was to listen carefully and then quietly hand the user's own words back to them, with structure, in a way that helped them recognize what they'd been saying.

That restraint is the design. It's also, I think, the reason Claude was the right model. A model that wants to help too much would have ruined it.

I made the app for myself. About 10–15 people tested it through TestFlight. I'm a designer, not a marketer, so I didn't try to grow it past that. But the building taught me something I now bring back to my day job: when you design AI for personal context, the question isn't what the AI can do. It's what the AI should refuse to do. The right answer is usually less than you think.

I used it for months. The Sunday recap usually showed me what I'd been writing about without realizing it. I'm not using it now. Building it taught me what I needed to learn, which was the point.